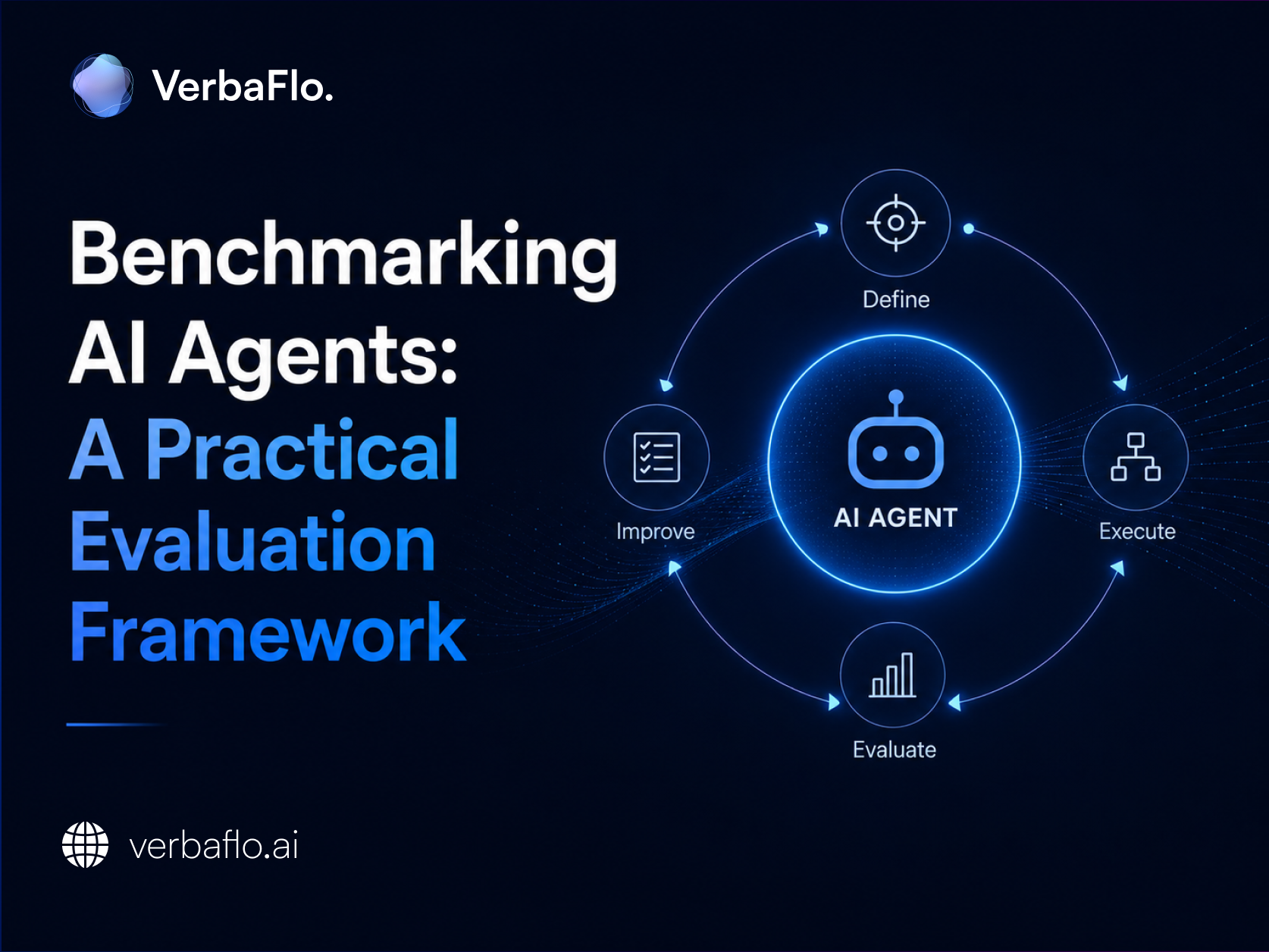

Benchmarking AI Agents: A Practical Evaluation Framework

Traditional LLM benchmarks fall short when evaluating AI agents. Explore the four pillars of agent evaluation, key frameworks like GAIA and SWE-bench, and a five-step pipeline to measure real-world performance reliably.

Evaluating AI systems has become more complex as businesses move from standalone models to autonomous workflows. Traditional AI performance benchmarks were designed for static outputs. They do not fully capture how agents behave in real-world environments.

This is where AI agent evaluation becomes critical. Agents do not just generate responses. They take action, interact with tools, and perform multiple steps. Measuring their performance requires a different approach, one that focuses on outcomes, reliability, and execution, not just accuracy.

This guide provides a functional framework for benchmarking AI agents, detailing the limitations of current metrics, strategies for effective assessment, and methods for developing evaluation systems that reflect real-world operational conditions.

Why Standard LLM Benchmarks Fail for Agents

Most AI performance benchmarks were built to evaluate language models, not agents. They measure accuracy on static prompts, single-turn responses, and predefined datasets. While such benchmarks are effective for evaluating models, they are insufficient for assessing agents.

Agents work differently: they plan, execute actions, use tools, and refine their approach based on intermediate results. Performance therefore cannot be judged from a single output, because that output is only the end point of a multi-step process.

This creates four clear gaps in traditional evaluation:

- Single-step vs multi-step execution

Benchmarks test isolated responses. Agents solve tasks across multiple steps. A correct final answer can still hide inefficient or incorrect intermediate decisions.

- No tool interaction

Standard tests ignore how agents use APIs, databases, or external systems. In production, this is where many failures occur.

- Lack of real-world context

Benchmarks rely on fixed datasets. Agents operate in dynamic environments where inputs change, dependencies fail, and conditions evolve.

- No measurement of reliability

Traditional metrics focus on correctness. Agent systems need to be evaluated on consistency, error recovery, and the ability to complete tasks end-to-end.

This is why AI agent evaluation cannot rely solely on LLM benchmarks. It needs to account for execution, decision-making, and system behaviour over time.

The Four Pillars of AI Agent Evaluation

A strong AI agent evaluation framework is built on four clear pillars. These focus on how well an agent performs in real scenarios, not just how it responds in isolation.

Task completion

At the most basic level, the agent needs to get the job done. This means successfully achieving the user’s goal, whether that is resolving a query, completing a workflow, or retrieving the right information. If the task is not completed, the interaction does not deliver value.

Process integrity

It is not just about the outcome, but also how the agent gets there. The agent should follow the right steps, stay within defined boundaries, and avoid unnecessary or incorrect actions. A structured and controlled process builds trust and reduces risk.
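
One way to make process integrity measurable is to check the recorded trajectory against explicit rules. The sketch below assumes a trajectory is a list of step records; the `Step` type, the tool names, and the rule set (allowed tools, a required ordering, a step budget) are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str   # hypothetical name of the tool the agent invoked
    args: dict


def check_process(steps, allowed, required_order, max_steps):
    """Return a list of readable violations (an empty list means a clean run)."""
    violations = []
    if len(steps) > max_steps:
        violations.append(f"used {len(steps)} steps, budget is {max_steps}")
    for i, step in enumerate(steps):
        if step.tool not in allowed:
            violations.append(f"step {i}: tool '{step.tool}' is not allowed")
    # required_order must appear as a subsequence of the tools actually called.
    # `in` on a generator consumes it, so later tools must come after earlier ones.
    called = (s.tool for s in steps)
    for tool in required_order:
        if tool not in called:
            violations.append(f"required step '{tool}' missing or out of order")
            break
    return violations


trajectory = [
    Step("lookup_customer", {"email": "a@example.com"}),
    Step("check_refund_policy", {"order_id": "42"}),
    Step("issue_refund", {"order_id": "42"}),
]
violations = check_process(
    trajectory,
    allowed={"lookup_customer", "check_refund_policy", "issue_refund"},
    required_order=["check_refund_policy", "issue_refund"],
    max_steps=5,
)
print(violations)
```

A run that reaches the right outcome but skips the policy check would still be flagged here, which is exactly the case a final-answer check misses.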

Tool use

Most agents rely on external systems such as APIs, databases, or internal tools. The agent should be able to choose the right tool for the task and use it correctly. Poor tool usage often leads to delays, errors, or incomplete results.
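
Tool use can be scored separately from the final outcome. The following is a minimal sketch, assuming each test case comes with a reference list of expected tool calls; the tool names and the strict position-by-position comparison are assumptions, and a real pipeline may need a more forgiving match.

```python
def score_tool_calls(expected, actual):
    """Compare expected and actual tool calls position by position.

    Each call is a (tool_name, args_dict) tuple. Returns the share of expected
    calls where the right tool was chosen, and the share where the arguments
    also matched exactly. Calls the agent never made count as misses.
    """
    if not expected:
        raise ValueError("expected must contain at least one call")
    right_tool = right_args = 0
    for (exp_tool, exp_args), (act_tool, act_args) in zip(expected, actual):
        if act_tool == exp_tool:
            right_tool += 1
            if act_args == exp_args:
                right_args += 1
    n = len(expected)
    return {"tool_selection": right_tool / n, "argument_match": right_args / n}


expected_calls = [
    ("search_orders", {"customer_id": 7}),
    ("get_order", {"order_id": "A1"}),
]
actual_calls = [
    ("search_orders", {"customer_id": 7}),
    ("get_order", {"order_id": "A2"}),   # right tool, wrong argument
]
tool_scores = score_tool_calls(expected_calls, actual_calls)
print(tool_scores)
```

Splitting the score this way shows whether the agent is picking the wrong tool or calling the right tool badly, which usually call for different fixes.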

Reliability

Consistency matters. The agent should perform reliably across repeated interactions, even when inputs vary slightly. Users expect predictable behaviour, not outcomes that change each time for the same request.
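
Reliability looks different depending on how it is counted. As a rough sketch, assuming each task has been run several times and recorded as pass or fail (the task names and results below are made up), an average success rate can be compared with a stricter rate that only credits tasks where every run succeeded.

```python
def reliability_summary(results):
    """results maps task id -> list of booleans, one per repeated run."""
    per_task_rate = {task: sum(runs) / len(runs) for task, runs in results.items()}
    mean_success = sum(per_task_rate.values()) / len(per_task_rate)
    # Stricter view: a task only counts if every repeated run succeeded.
    all_runs_pass = sum(all(runs) for runs in results.values()) / len(results)
    return {"mean_success": mean_success, "all_runs_pass": all_runs_pass}


results = {
    "reset_password": [True, True, True, True],
    "update_address": [True, False, True, True],
    "cancel_booking": [True, True, True, True],
    "refund_order": [False, True, True, False],
}
summary = reliability_summary(results)
print(summary)
```

In this illustrative data the agent succeeds on most individual runs, yet only half of the tasks are handled correctly every time. The gap between the two numbers is a direct measure of inconsistency.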

Together, these pillars create a practical approach to AI performance benchmarks. They ensure evaluation is grounded in real-world performance, where consistency and execution matter most.

Standard Benchmarks Explained: GAIA, SWE-bench, WebArena, MINT

Standard AI agent benchmarking frameworks are evolving to reflect real-world execution. Unlike traditional AI performance benchmarks, these focus on how agents plan, act, and complete tasks across environments. Here are four widely used benchmarks and what they measure:

GAIA: General Assistant-Style Agent Tasks

GAIA assesses agent capabilities through complex, practical assignments that mirror the demands placed on proficient digital assistants. These operations typically involve multi-stage processes, including web navigation, document retrieval, computational tasks, data interpretation, and the synthesis of disparate information streams.

The utility of the GAIA framework lies in its rejection of superficial outputs; it requires that an agent arrive at accurate conclusions through logical deduction and the effective use of tools. Consequently, it serves as a critical instrument for vetting versatile assistants intended for diverse corporate applications.
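
GAIA-style tasks end in a short final answer, so scoring can be done by comparing strings after light normalisation. The sketch below is a simplified illustration of that idea and is not the benchmark's official scorer; the normalisation rules are assumptions.

```python
import re


def to_number(text):
    """Parse plain numbers such as '1,200' or '3.5'; return None otherwise."""
    cleaned = text.strip().replace(",", "")
    return float(cleaned) if re.fullmatch(r"-?\d+(\.\d+)?", cleaned) else None


def normalise(answer):
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", answer.lower())
    return re.sub(r"\s+", " ", text).strip()


def answers_match(predicted, expected):
    # Compare numerically when both sides are numbers, so "1,200" equals "1200.0".
    p, e = to_number(predicted), to_number(expected)
    if p is not None and e is not None:
        return p == e
    return normalise(predicted) == normalise(expected)


print(answers_match("1,200", "1200.0"))
print(answers_match(" Paris. ", "paris"))
print(answers_match("Lyon", "Paris"))
```

Because the check is deterministic, the same agent output always receives the same score, which is harder to guarantee when a model grades free-form text.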

SWE-bench: Real Software Engineering Tasks

SWE-bench focuses on coding agents. It tests whether an agent can fix real GitHub issues from open-source repositories. The agent receives an issue description and the existing codebase, then produces a working code change.

This benchmark is practical because it measures real execution, not theoretical coding knowledge. The final score depends on whether the issue is actually resolved. For engineering teams, SWE-bench is useful for evaluating agents for debugging, code editing, and development support.
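
SWE-bench decides resolution by running the repository's tests after the agent's patch is applied: tests that failed before the fix must now pass, and tests that passed before must still pass. A minimal sketch of that rule follows, with hypothetical test names and a plain dictionary standing in for real test-runner output.

```python
def is_resolved(test_results, fail_to_pass, pass_to_pass):
    """test_results maps test name -> True if the test passed after the patch.

    A test that is missing from the results is treated as a failure.
    """
    fixed = all(test_results.get(name, False) for name in fail_to_pass)
    not_broken = all(test_results.get(name, False) for name in pass_to_pass)
    return fixed and not_broken


# Hypothetical results from running the test suite on the patched repository.
after_patch = {
    "test_parse_empty_header": True,    # reproduced the issue, now passes
    "test_parse_basic": True,           # passed before, still passes
    "test_parse_unicode": False,        # passed before, broken by the patch
}

resolved = is_resolved(
    after_patch,
    fail_to_pass=["test_parse_empty_header"],
    pass_to_pass=["test_parse_basic", "test_parse_unicode"],
)
print(resolved)
```

The example patch fixes the reported issue but breaks an unrelated test, so it does not count as resolved. The same two-sided check is a reasonable pattern for in-house coding-agent evaluations.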

WebArena: Web Navigation and Workflow Completion

WebArena evaluates agents that interact with websites and web apps. The agent may need to complete tasks such as updating a page, adding an item to a cart, creating a post, or changing settings inside a platform.

WebArena's strength is that it tests action accuracy. It checks not only whether the agent understands the task, but also whether the system's final state is correct. This makes it useful for agents that need to operate inside browser-based tools or SaaS platforms.
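
Checking the final state can be as simple as comparing the fields that matter against what the task should have produced. This is an illustrative sketch, not WebArena's own evaluator; the cart fields and values are hypothetical.

```python
MISSING = "<missing>"  # placeholder reported when a field is absent from the actual state


def state_mismatches(expected, actual):
    """Return {field: (expected, actual)} for every expected field that differs.

    Fields that appear only in `actual` are ignored, so the check stays focused
    on what the task was supposed to change.
    """
    return {
        key: (want, actual.get(key, MISSING))
        for key, want in expected.items()
        if actual.get(key, MISSING) != want
    }


expected_state = {"cart_items": ["SKU-123"], "cart_total": 19.99}
actual_state = {"cart_items": ["SKU-123"], "cart_total": 24.99, "session": "abc"}

mismatches = state_mismatches(expected_state, actual_state)
print(mismatches)
```

An empty result means the task left the system in the expected state, regardless of how confident or fluent the agent's final message was.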

MINT: Multi-Turn Interaction with Tools and Feedback

MINT evaluates how well a model solves tasks over several turns rather than in a single attempt. Within a limited turn budget, the model can call tools by executing Python code and can receive natural-language feedback on its attempts, with that feedback simulated by another model.

This matters because many real-world tasks are not solved in one shot. An agent may need to try an approach, inspect the result, take a correction from the user, and try again. MINT helps evaluate whether an agent actually improves when it is given tools and feedback across turns.

Together, these benchmarks show why AI agent evaluation needs to be practical. The real question is not whether an agent can generate a good answer. It is whether it can complete the task accurately, safely, and consistently.

How to Build Your Own Evaluation Pipeline (5 Steps)

Public benchmarks are useful for comparison, but they do not reflect how your agent performs in your business environment. A strong AI agent benchmarking approach needs to be tailored to your workflows, users, and systems.

Here is a simple, practical way to build your own evaluation pipeline:

Step 1: Focus on outcomes, not responses

Start by defining what success looks like. The goal is not to judge how the agent sounds, but whether it completes the task correctly. For example, did it update the system, resolve the request, or trigger the right action?

Step 2: Create a safe testing environment

Avoid testing on live data. Set up controlled environments where the agent can operate freely without risk. This allows you to test real actions while keeping systems and data secure.
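
A lightweight way to do this is to replace each external system with an in-memory stand-in that behaves like the real one and records every call. The sketch below is an assumption-laden example: `FakeTicketSystem`, its `close_ticket` method, and `toy_agent` are hypothetical placeholders for your own tools and agent.

```python
class FakeTicketSystem:
    """In-memory stand-in for a real ticketing API (hypothetical interface)."""

    def __init__(self, tickets):
        self.tickets = {t["id"]: dict(t) for t in tickets}
        self.calls = []  # audit log of every action the agent attempted

    def close_ticket(self, ticket_id, resolution):
        self.calls.append(("close_ticket", ticket_id))
        if ticket_id not in self.tickets:
            raise KeyError(f"unknown ticket {ticket_id}")
        self.tickets[ticket_id].update(status="closed", resolution=resolution)


def toy_agent(system, ticket_id):
    # Placeholder for the real agent; it only knows how to close a ticket.
    system.close_ticket(ticket_id, resolution="password reset link sent")


sandbox = FakeTicketSystem([{"id": "T-1", "status": "open"}])
toy_agent(sandbox, "T-1")

print(sandbox.tickets["T-1"]["status"])
print(sandbox.calls)
```

Because the fake holds its own state, each test can start from a known snapshot, and the call log gives you the trajectory for free.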

Step 3: Simulate real user interactions

Testing should reflect how users actually behave. Instead of relying only on manual checks, create realistic scenarios that include different user intents, edge cases, and multi-step conversations.
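
Scenario sets can be generated rather than written one by one. As a small sketch, a handful of base intents can be crossed with simple perturbations to produce messier variants; the intents, messages, and perturbation functions below are invented for illustration.

```python
from itertools import product

# Hypothetical base intents with one clean phrasing each.
intents = {
    "book_viewing": "I would like to book a viewing for Friday",
    "cancel_booking": "Please cancel my booking for tomorrow",
}

# Simple, deterministic ways of making a message less tidy.
perturbations = {
    "clean": lambda text: text,
    "typos": lambda text: text.replace("book", "bok").replace("cancel", "cancle"),
    "shouting": lambda text: text.upper(),
    "rambling": lambda text: "Sorry, I am on my phone and in a rush. " + text,
}

scenarios = [
    {"id": f"{intent}/{name}", "intent": intent, "message": perturb(text)}
    for (intent, text), (name, perturb) in product(intents.items(), perturbations.items())
]

for scenario in scenarios:
    print(scenario["id"], "->", scenario["message"])
```

Two intents and four perturbations already give eight scenarios. Keeping the intent label on each scenario means the expected outcome stays known even when the wording changes.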

Step 4: Test for consistency

Run the same scenario multiple times. A single successful outcome is not enough. The agent should perform reliably across repeated attempts, even when the inputs vary slightly.
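
A small number of repeated runs gives only a rough estimate of the true success rate. One way to keep that uncertainty visible is to report a confidence interval alongside the raw rate. The sketch below uses the Wilson score interval, a standard formula for proportions; the run counts are illustrative.

```python
import math


def wilson_interval(successes, runs, z=1.96):
    """Approximate 95% confidence interval for a success rate (Wilson score)."""
    if runs <= 0:
        raise ValueError("need at least one run")
    p = successes / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    margin = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - margin), min(1.0, centre + margin)


low, high = wilson_interval(successes=9, runs=10)
print(f"9/10 runs passed, plausible success rate: {low:.2f} to {high:.2f}")

low_all, high_all = wilson_interval(successes=10, runs=10)
print(f"10/10 runs passed, plausible success rate: {low_all:.2f} to {high_all:.2f}")
```

Even a perfect score over ten runs leaves a wide range of plausible success rates, which is a useful argument for running more repetitions on the scenarios that matter most.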

Step 5: Make the evaluation continuous

Evaluation should not be a one-time activity. Every update, whether it is a prompt change or a system improvement, should be tested. This helps catch issues early and ensures performance remains stable over time.
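
In practice this often takes the form of a regression gate: each change is scored on the same scenario set and compared with a stored baseline. The following is a minimal sketch under that assumption; the metric names, scores, and tolerance are made up for illustration.

```python
def find_regressions(baseline, candidate, tolerance=0.02):
    """Return metrics where the candidate is worse than the baseline by more than tolerance.

    Both arguments map metric name -> score in [0, 1], where higher is better.
    A metric missing from the candidate is reported as a regression.
    """
    regressions = {}
    for metric, old in baseline.items():
        new = candidate.get(metric)
        if new is None or new < old - tolerance:
            regressions[metric] = (old, new)
    return regressions


# Illustrative scores: the stored baseline and the run for a new prompt version.
baseline = {"task_completion": 0.91, "tool_selection": 0.88, "all_runs_pass": 0.74}
candidate = {"task_completion": 0.93, "tool_selection": 0.81, "all_runs_pass": 0.73}

regressions = find_regressions(baseline, candidate)
print(regressions)
```

A CI job could fail the build whenever the returned dictionary is non-empty. The tolerance keeps small run-to-run noise from blocking every change, and should be set with the variance of your own scores in mind.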

A well-structured pipeline makes AI agent evaluation consistent and actionable. It shifts the focus from one-off testing to ongoing performance, which is essential for building reliable systems at scale.

Evaluation Tools: LangSmith, DeepEval, Galileo, Langfuse

Building an AI agent evaluation pipeline from scratch can be time-consuming. This is where specialised tools make a difference. They help teams track performance, test behaviour, and improve reliability without starting from zero.

Here are four widely used platforms:

LangSmith

LangSmith focuses on visibility. It shows exactly how your agent is making decisions, step by step. You can see which tools were used, how the flow progressed, and where things went wrong. This makes it easier to debug and improve performance.

DeepEval

DeepEval works like a testing framework for AI systems. It allows you to create structured tests for your agent and evaluate outputs against clear criteria such as accuracy and relevance. This helps bring consistency to how performance is measured.

Galileo

Galileo is designed for production environments. It focuses on monitoring agent behaviour, identifying issues such as incorrect or ungrounded responses, and helping teams address them before they affect users.

Langfuse

Langfuse combines tracing and evaluation in one flexible platform. It is particularly useful for teams building custom workflows, as it allows you to analyse multi-step interactions and measure performance across the full agent lifecycle.

These tools simplify AI agent benchmarking by making evaluation more structured and repeatable. Instead of relying on manual checks, teams can build a system that continuously tracks how agents perform and where they need improvement.

Common Benchmarking Mistakes to Avoid

Setting up an AI agent benchmarking process is not just about what you measure, but how you measure it. A few common mistakes can make results look better than they actually are.

Relying too much on automated scoring

Using another model to judge your agent’s responses can be convenient, but it is not always reliable. These evaluations can be subjective and inconsistent. Wherever possible, focus on clear, outcome-based checks. Did the agent complete the task correctly? That is a more dependable measure.
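
Where a model-based judge is unavoidable, it helps to measure how well it agrees with human reviewers before trusting it. A rough sketch, assuming a small sample has been labelled pass or fail by both a person and the judge (the labels below are invented), uses Cohen's kappa to correct raw agreement for chance.

```python
def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two raters on binary pass/fail labels."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equally sized, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    p_a = sum(labels_a) / n   # share of passes given by rater A
    p_b = sum(labels_b) / n   # share of passes given by rater B
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)
    if expected == 1:
        return 1.0  # both raters used the same single label throughout
    return (observed - expected) / (1 - expected)


# Illustrative labels for the same ten agent runs.
human = [True, True, True, False, False, True, False, True, True, False]
judge = [True, True, False, False, False, True, True, True, True, False]

kappa = cohens_kappa(human, judge)
print(round(kappa, 2))
```

Here the two raters agree on 8 of 10 runs, but the chance-corrected score is noticeably lower. A low kappa is a signal to tighten the judge's rubric or to fall back on outcome-based checks.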

Testing only ideal scenarios

Agents often perform well when everything is clean and predictable. Real users are not. They ask unclear questions, change their intent, and introduce unexpected inputs. Your evaluation should reflect this. Include edge cases, incomplete data, and difficult interactions to understand how the agent behaves under pressure.

Ignoring response time

Performance is not just about accuracy. Speed matters. An agent that takes too long to respond or act will create a poor user experience, even if the outcome is correct. Measuring how quickly the agent responds and completes actions should be part of your evaluation.
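
Latency is best summarised with percentiles, since a few slow runs can dominate the user experience while barely moving the average. The sketch below times a placeholder agent function; `fake_agent` and the request list are hypothetical, and `summarise` needs at least two measurements.

```python
import statistics
import time


def measure_latency(agent_fn, requests):
    """Call agent_fn on each request and return per-call latencies in seconds."""
    latencies = []
    for request in requests:
        start = time.perf_counter()
        agent_fn(request)
        latencies.append(time.perf_counter() - start)
    return latencies


def summarise(latencies):
    """Return median, 95th percentile, and worst-case latency."""
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(latencies)}


def fake_agent(request):
    time.sleep(0.001)  # stands in for the real agent call


latencies = measure_latency(fake_agent, ["a", "b", "c", "d", "e"])
print(summarise(latencies))
```

Timing the whole task, from the user's request to the completed action, tends to be more informative than timing individual model calls.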

Avoiding these mistakes makes your AI agent evaluation more realistic and more useful. It ensures you measure performance in a way that reflects the actual user experience, not just controlled test conditions.

